ChatGPT is an AI-driven natural language processing tool that lets you hold human-like conversations, and it is considered more advanced than traditional chatbots.

Ever since its launch in November 2022, CISOs have raised many security concerns about ChatGPT, and many organizations have blocked its use.

Resistance to adopting new inventions on the grounds of risk is quite common. In this article, we highlight these security challenges and explain how to address them.

60% of organizations have explored ChatGPT in some form or another, and 12% of those organizations use it extensively. The key use cases are analytics, marketing and analysis, research and development, and fraud detection.

Here are some sample use cases for the healthcare and BFSI (banking, financial services, and insurance) industries.

Healthcare

- Patient triage: ChatGPT can be used to triage patients by asking the right questions about their symptoms and medical history to determine the urgency and severity of their condition.

- Virtual assistants for telemedicine: ChatGPT can power a virtual assistant that helps patients schedule appointments, receive treatment details, and manage their health information.

- Medical recordkeeping: ChatGPT can be used to generate automated summaries of patient interactions and medical histories, which can help streamline the medical recordkeeping process. With ChatGPT, doctors and nurses can dictate their notes, and the model can automatically summarize key details.

- Remote patient monitoring: ChatGPT can be used to monitor patients remotely by analyzing data from wearable devices, sensors, and other monitoring devices, providing real-time insights into patients' health.

BFSI

- Customer onboarding: ChatGPT can reduce the time taken to onboard a client and simplify the KYC process by asking relevant questions and guiding users through each step.

- Marketing: Banks can use ChatGPT to analyze customer data and build personalized marketing campaigns that target specific customer segments.

- Customer service: ChatGPT can assist human agents in answering customer questions, improving efficiency and response times and providing more accurate and detailed information. This can improve customer service and satisfaction, and it can also support employee onboarding.

Here are some of the key issues that organizations may face when using ChatGPT if proper security measures are not taken.

- Exposing Personally Identifiable Information (PII) or Personal Health Information (PHI) through chatbot conversations.

- Employees sharing the organization's classified information, resulting in a loss of competitive advantage.

- Generating malicious code that either sends data to external entities or lies dormant to launch an attack at a later point in time.

- Generating code that contains malware, which can infect the developer's machine.

- Sophisticated phishing attacks using convincing, AI-generated messages.
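One way to reduce the risk of running malicious code suggested by ChatGPT is to screen generated code statically before anyone executes it. The sketch below is a minimal illustration, assuming the generated code is Python: a hypothetical helper, `flag_risky_code`, parses the code with the standard-library `ast` module (without running it) and flags imports of networking or process-control modules and calls such as `exec`. The deny-lists are illustrative assumptions, and simple checks like this are easy to evade, so they complement, rather than replace, human code review and dedicated security scanning tools.

```python
import ast

# Hypothetical deny-list: modules whose use in generated code should trigger human review.
RISKY_MODULES = {"socket", "subprocess", "ftplib", "smtplib", "urllib", "requests", "http"}
# Hypothetical deny-list: built-in calls that can execute or load arbitrary code.
RISKY_CALLS = {"eval", "exec", "compile", "__import__"}


def flag_risky_code(source: str) -> list[str]:
    """Return findings for risky constructs in Python source.

    The code is only parsed, never executed, so this is safe to run on untrusted output.
    """
    findings = []
    tree = ast.parse(source)
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            # e.g. "import socket" or "import http.client"
            for alias in node.names:
                if alias.name.split(".")[0] in RISKY_MODULES:
                    findings.append(f"line {node.lineno}: imports {alias.name}")
        elif isinstance(node, ast.ImportFrom) and node.module:
            # e.g. "from urllib.request import urlopen" (relative imports have no module)
            if node.module.split(".")[0] in RISKY_MODULES:
                findings.append(f"line {node.lineno}: imports from {node.module}")
        elif isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            # Direct calls to dangerous built-ins such as exec(...)
            if node.func.id in RISKY_CALLS:
                findings.append(f"line {node.lineno}: calls {node.func.id}()")
    return findings


if __name__ == "__main__":
    generated = (
        "import os\n"
        "import socket\n"
        "data = open('secrets.txt').read()\n"
        "exec(data)\n"
    )
    for finding in flag_risky_code(generated):
        print(finding)
```

Any non-empty result would route the snippet to a reviewer instead of being pasted into the codebase.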

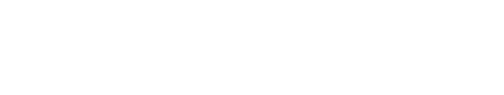

Organizations implementing ChatGPT use cases should define the purpose, understand the security and data privacy implications, and keep in mind that the output is prone to bias. Here are some best practices to consider when implementing ChatGPT:

- Keep humans in the loop so that irrelevant or out-of-context information is not shared with users.

- Ensure that sensitive data such as PHI, PII, or financial data is not shared.

- Validate that generated content is accurate and relevant.

- Ensure that the output does not introduce ethnic, gender, or racial biases.

- Have a feedback mechanism where users can share their input, and address the relevant items in subsequent sprints.
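The practice of keeping PHI, PII, and financial data out of ChatGPT can be partly enforced by redacting prompts before they leave the organization, for example in a gateway that sits between employees and the ChatGPT service. The sketch below is a minimal illustration of that idea: the helper `redact` and the three regular expressions (email address, US Social Security number, card number) are hypothetical. Pattern matching alone misses names, addresses, and free-text diagnoses, so a real deployment would rely on a vetted PII/PHI detection service tuned to its own data.

```python
import re

# Hypothetical patterns for illustration only; they are applied in this order.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a [LABEL] placeholder and count matches per label."""
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


if __name__ == "__main__":
    prompt = (
        "Summarize the complaint from jane.doe@example.com, "
        "SSN 123-45-6789, card 4111 1111 1111 1111."
    )
    safe_prompt, found = redact(prompt)
    print(safe_prompt)  # only this redacted text would be sent to ChatGPT
    print(found)        # counts could be logged for audit purposes
```

Returning the counts alongside the redacted text lets the gateway log or block prompts that contained sensitive data, which also feeds the feedback mechanism described above.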

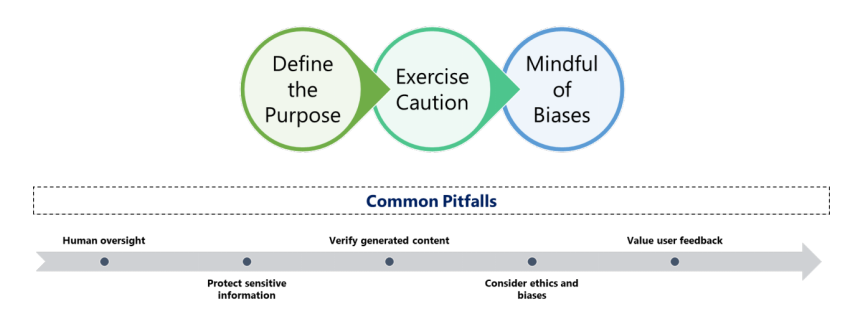

Security measures

Consider the following key security measures for the successful implementation of ChatGPT use cases.

Author

Kannan Srinivasan

Kannan has over 23 years of experience in cybersecurity and delivery management. He is a subject matter expert in cloud security, infrastructure security (including SOC), vulnerability management, GRC, identity and access management, and managed security services. He has led various security transformation engagements for large banks and financial services clients.